Welfare economics: an introduction (part 1 of a series)

This is the first part of a series. See parts 2, 3, 4, and 5.

Commenters at interfluidity are usually much smarter than the author whose pieces they scribble beneath, and the previous post was no exception. But there were (I think) some pretty serious misconceptions in the comment thread, so I thought I’d give a bit of a primer on “welfare economics”, as I understand the subject. It looks like this will go long. I’ll turn it into a series.

Utility, welfare, and efficiency

Our first concern will be a question of definitions. What is the difference between, and the relationship of, “welfare” and “utility”? The two terms sound similar, and seem often to be used in similar ways. But the difference between them is stark and important.

“Utility” is a construct of descriptive or “positive” economics. The classical tradition asserts that economic behavior can be usefully described and predicted by imagining economic agents who rank the consequences of possible actions and choose the action associated with the highest ranking. Utility, strictly speaking, has nothing whatsoever to do with well-being. It is simply a modeling construct that (it is hoped) helps organize and describe observed behavior. To claim that “people value utility” is a claim very similar to “nature abhors a vacuum”. It’s a useful way of putting things, but nature’s abhorrence is not meant to signal an actual discomfort demanding remedy in an ethical sense. Subjective well-being, of an individual human or of the universe at large, is simply not a topic amenable to empirical science. By hypothesis, human agents “strive” to maximize utility, just as molecules “strive” to find lower-energy states over the course of a chemical reaction. Utility is important not as a desideratum of scientifically inaccessible minds, but as a tool invented by economists, a technique for describing and modeling human behavior that may (or may not!) turn out to be useful.

“Welfare” is a construct of normative economics. While “utility” is a thing we imagine economic agents maximize, “welfare” is what economists seek to maximize when they offer policy advice. There is no such thing as, and can be no such thing as, a “scientific welfare economics”, although the discipline is still burdened by a failed and incoherent attempt to pretend to one. Whenever a claim about “welfare” is asserted, assumptions regarding ethical value are necessarily invoked as well. If you believe otherwise, you have been swindled.

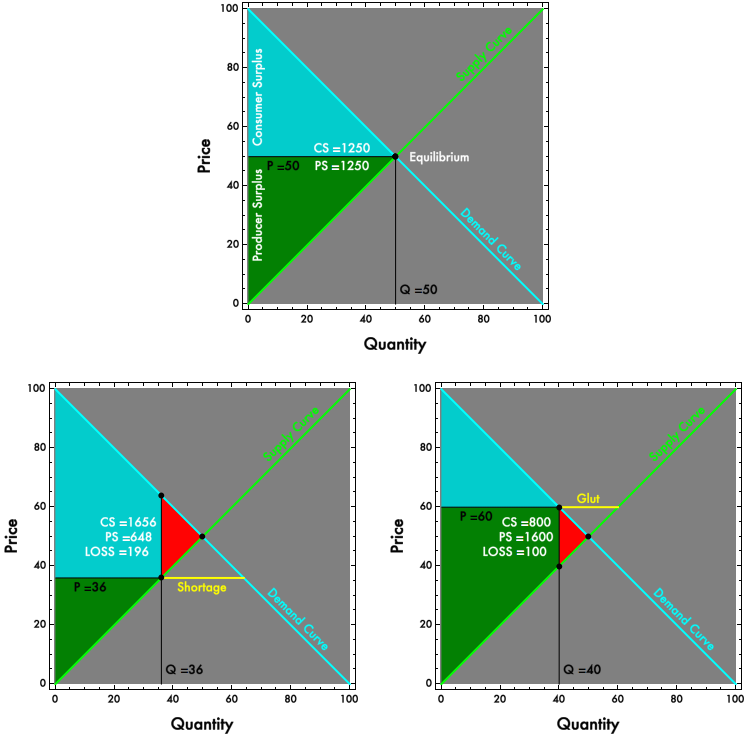

If claims about welfare can’t be asserted in a value-neutral way, then neither can claims of “efficiency”. Greg Mankiw teaches that “[under] free markets…[transactors] are together led by an invisible hand to an equilibrium that maximizes total benefit to buyers and sellers”. That assertion becomes completely insupportable. Even the narrow and technical notion of Pareto efficiency, often omitted from undergraduate treatments, is rendered problematic, as nonmarket allocations can also be Pareto efficient and value-neutral ranking of allocations becomes impossible. Welfare economics is the very heart of introductory economics. Market efficiency, deadweight loss, tax incidence, price discrimination, international trade — all of these topics are diagrammed and understood in terms of what happens to the area between supply and demand curves. If we cannot redeem those diagrams, all of that becomes little more than propaganda. (We’ll think later on about how we might redeem them!)

The prehistory of a problem

The term “utility” is associated with Jeremy Bentham’s “utilitarianism”, which sought to provide “the greatest good for the greatest number”. Prior to the 20th Century, utility was an intuitive quantifier of this “goodness”. It represented a cardinal quantity: 15 Utils is better than 10 Utils, and we could think about comparing and summing Utils enjoyed by multiple people. Classical utilitarianism made no distinction between utility and welfare. Individuals were hypothesized to maximize something that could be understood as “well-being” in a moral sense, and this well-being was at least in theory quantifiable and comparable across individuals. “Maximizing aggregate utility” and “maximizing social welfare” amounted to the same thing. Utility had a meaningful quantity; it represented an amount of something, even if that something was as unobservable as the free energy in a chemist’s flask.

The 20th Century saw an attempt to “scientificize” economics. The core choice associated with this scientificization was a decision to reconceive of utility as strictly “ordinal”. A posited value for utility was to serve as a tool for ranking of potential actions, significant only by virtue of whether it was greater than or less than some other value, with no meaning whatsoever attached to the distance between. If an agent must choose between a chocolate bar and a banana, and reliably goes for the Ghirardelli, then it is equivalent to attribute 3 Utils or 300 Utils to the candy, as long as we have attributed less than 3 Utils to the banana. The ordering alone determines agents’ choices. Any values that preserve the ordering are identical in their implications and their accuracy.
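To make the point concrete, here is a minimal Python sketch. The agent, the option names, and the specific numbers are illustrative assumptions, not data; the only thing the sketch relies on is that the agent picks whichever option carries the larger number.

```python
# Two hypothetical utility labelings for the same agent.
# The numbers are arbitrary; only their order matters.
labeling_small = {"chocolate": 3, "banana": 1}
labeling_large = {"chocolate": 300, "banana": 2}


def choose(utility):
    """Return the option with the highest utility number."""
    return max(utility, key=utility.get)


def ranking(utility):
    """Return the options sorted from most to least preferred."""
    return sorted(utility, key=utility.get, reverse=True)


# Both labelings predict exactly the same behavior.
same_choice = choose(labeling_small) == choose(labeling_large)
same_ranking = ranking(labeling_small) == ranking(labeling_large)
print(choose(labeling_small), same_choice, same_ranking)
```

Any relabeling that keeps the chocolate's number above the banana's would pass the same comparison, which is all that “ordinal” means here.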

There is nothing inherently more scientific about using an ordinal rather than a cardinal quantity to describe an economic construct. Chemists’ free energy, or the force required to maintain the pressure differential of a vacuum, are cardinal measures of constructs as invisible as utility and with a much stronger claim to validity as “science”.

The reconceptualization of utility in strictly ordinal terms represented a contestable methodological choice. It carries within it a substantive assertion that the only useful measure of preference intensity is a ranking of alternatives. If one person claims to be near indifferent between the banana and the chocolate, but reliably chooses the chocolate, while another person claims to love chocolate and hate bananas, economic methodology declares the two equivalent and the verbal distinction of value (or observable differences in heart rates or skin tone or whatever that may accompany the choice) unworthy or unuseful to measure. It could be the case, for example, that a cardinal measure of preference intensity based on heart rates and brainwaves would predict behavior more effectively than a strictly ordinal measure (just as measuring the heat generated by a chemical reaction provides information useful in addition to the fact that the reaction does occur). But, wisely or not (I’m agnostic on the point), economists of the early 20th Century decided that mere rankings of choices offered a sufficient, elegant, and straightforwardly measurable basis for a scientific economics and that subjective or objective covariates that might be interpreted as intensity were best discarded. (Perhaps this will change with some “neuroeconomics”. Most likely not.)

An entirely useful and salutary effect of the reconceptualization was that it forced a distinction, blurred in traditional utilitarianism, between positive and normative conceptions of utility, or in the language now used, between “utility” and “welfare”. It rendered this distinction particularly obvious with respect to notions of aggregate welfare or utility. Ordinal values can’t meaningfully be summed. If we attach the value 3 utils to one individual’s chocolate bar and 300 utils to another’s, these numbers are arbitrary, and it does not follow that giving the candy to the second person will “improve overall well-being” any more than giving it to the first would. A scientific economics whose empirical data are “revealed preferences” — which, among multiple alternatives, does an individual choose? — has nothing analogous to measure with respect to the question of group choice. Given one chocolate bar and two individuals, the “revealed preference” of the group might be determined by which has the stronger fist, a characteristic that seems conceptually distinct from the unobservable determinants of action within an individual.
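The claim that ordinal values can’t meaningfully be summed can be illustrated with a small Python sketch. The two people, the labels, and the numbers are hypothetical; both labelings are assumed to describe the same observed behavior (each person, offered the choice, takes the chocolate bar over nothing).

```python
def individual_choice(utility):
    """What one person picks when offered every option in `utility`."""
    return max(utility, key=utility.get)


def sum_maximizing_recipient(labeling):
    """Who 'should' get the single bar if we naively add up utility numbers."""
    def total(recipient):
        return sum(
            u["bar"] if person == recipient else u["nothing"]
            for person, u in labeling.items()
        )
    return max(labeling, key=total)


# Two equally valid ordinal labelings of the same two people.
labeling_1 = {"first": {"bar": 3, "nothing": 0}, "second": {"bar": 300, "nothing": 0}}
labeling_2 = {"first": {"bar": 300, "nothing": 0}, "second": {"bar": 3, "nothing": 0}}

# Individual behavior is identical under both labelings...
behavior_1 = {p: individual_choice(u) for p, u in labeling_1.items()}
behavior_2 = {p: individual_choice(u) for p, u in labeling_2.items()}

# ...but the naive aggregate recommendation flips.
print(behavior_1 == behavior_2)
print(sum_maximizing_recipient(labeling_1), sum_maximizing_recipient(labeling_2))
```

Since nothing observable distinguishes the two labelings, the “aggregate” answer is an artifact of whichever numbers we happened to write down.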

However, it is an error, and quite a grievous one, to interpret (as a commenter did) this limited use of “revealed preference” as a predictor of group behavior as an “ethical principle” of welfare economics. Strictly speaking, when we are talking about utility, there are no ethical principles whatsoever, just observations and predictions. Even within one individual, even when we can observe that an individual reliably chooses chocolate bars over bananas, it does not follow as an ethical matter that supplying the chocolate in preference to the fruit improves well-being.

Within a single individual, to jump from utility to welfare, that is, to equate satisfying a “preference” that is epistemologically equivalent to nature’s abhorrence of vacuum with improving an individual’s well-being in a morally relevant way, requires a categorical leap, out of the realm of “scientific economics” and into what might be referred to as “liberal economics”. It is philosophical liberalism, associated with writers like John Stuart Mill and John Locke, that bridges the gap between observations about how people behave when faced with alternatives and “well-being” in a morally relevant sense. The liberal conflation of revealed preference with well-being is deeply contestable and much contested, for obvious reasons. Should we attach moral force to the choice of a chocolate bar over a banana, even under circumstances where the choice seems straightforwardly destructive of the chooser’s health? Philosophical liberalism depends on a mix of a priori assumptions about the virtue of freedom and consequentialist claims about “least bad” outcomes given diverse preferences (in a subjective and morally important sense, rather than as a scientist’s shorthand for morally neutral observed or predicted behavior).

I don’t wish to contest philosophical liberalism (I am mostly a liberal myself), just to point out that it is contestable and not remotely “scientific”. However, philosophical liberalism permits a coherent recasting of value-neutral “scientific” economics into a normative welfare economics but only at the level of the individual. Liberal economics permits us to interpret the preference maximization process summarized by increased utility rankings as welfare maximization in a moral sense. A liberal economist can assert that a person’s welfare is increased by trading a banana for a chocolate bar, if she would do so when given the option. She can even try to overcome the strictly ordinal nature of utility and uncover a morally meaningful preference intensity by, say, bundling the banana with some US dollars and asking how many dollars would be required to persuade her to stick with the banana. There are a variety of such cardinal measures of welfare, which go under names like “compensating variation” (very loosely, how much a person would pay to get the chocolate rather than the banana) and “equivalent variation” (how much you’d have to pay the person to keep the banana, again loosely). However, what all of these measures have in common is that they are only valid within the context of a single individual making the choice. Scientifico-liberal economics simply has no tools for ranking outcomes across individuals, and the dollar value preference intensities that might be measurable for one individual are not commensurable with the dollar values that might be measured for some other unless one imagines that those dollars actually change hands.
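The “bundle the banana with some dollars” procedure can be sketched in Python as a sequence of choice questions. Everything here is an assumption made for illustration: the agents are modeled as having a hidden switch point in dollars, and the function names `make_agent` and `elicit_switch_point` are hypothetical. The experimenter only ever observes which option is chosen.

```python
def make_agent(hidden_switch_point):
    """Hypothetical agent: takes banana-plus-dollars over chocolate
    only when the dollars offered reach a hidden switch point."""
    def prefers_banana_bundle(dollars):
        return dollars >= hidden_switch_point
    return prefers_banana_bundle


def elicit_switch_point(prefers_banana_bundle, low=0.0, high=100.0, tol=0.01):
    """Bisect on the dollar amount using only observed choices.

    Assumes the agent picks chocolate at `low` and the bundle at `high`.
    """
    while high - low > tol:
        mid = (low + high) / 2
        if prefers_banana_bundle(mid):
            high = mid   # bundle accepted: switch point is at or below mid
        else:
            low = mid    # chocolate chosen: switch point is above mid
    return high


person_a = make_agent(2.50)
person_b = make_agent(40.00)

dollars_a = elicit_switch_point(person_a)
dollars_b = elicit_switch_point(person_b)
print(round(dollars_a, 2), round(dollars_b, 2))
```

The sketch recovers a dollar figure for each person separately. Nothing in it licenses the inference that the second person “wants the chocolate more” than the first; the larger figure could just as well reflect that a dollar means less to someone with more of them.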

Aha! So what if we imagine the dollars actually do change hands? Could that serve as the basis for a scientifico-liberal interpersonal welfare economics? In a project most famously associated with John Hicks and Nicholas Kaldor, economists strove to claim that, yes, it could! They were mistaken, irredeemably I think, although most of the discipline seems not to have noticed. The textbooks continue to present deeply problematic normative claims as scientific and indisputable. (See the previous post, and more to follow!)

But before we part, let’s think a bit about what it would mean if we find that we have little basis for interpersonal welfare comparisons. Or more precisely, let’s think about what it does not mean. To claim that we have little basis for judging whether taking a slice of bread from one person and giving it to another “improves aggregate welfare” is very different from claiming that it can not or does not improve aggregate welfare. The latter claim is as “unscientific” as the former. One can try to dress a confession of ignorance in normative garb and advocate some kind of precautionary principle, primum non nocere in the face of an absence of evidence. But strict precautionary principles are not followed even in medicine, and are celebrated much less in economics. They are defensible only in the rare and special circumstance where the costs of an error are so catastrophic that near perfect certainty is required before plausibly beneficial actions are attempted. If the best “scientific” economics can do is say nothing about interpersonal welfare comparison, that is neither evidence for nor evidence against policies which, like all nontrivial policies, benefit some and harm others, including policies of outright redistribution.

I do actually think we can do a bit better than plead ignorance, but for that you’ll have to wait, breathlessly I hope, until the end of our series.

Note: Unusually, and with apologies, I’ve disabled comments on this post. This is the first of a series of planned posts. I wish to write the full series, and I don’t have the discipline not to be deflected by your excellent responses. The final post in the series will have comments enabled. Please write down your thoughts and save them for just a few days!

Update History:

- 30-May-2014, 2:25 p.m. PDT: “that is epistemologically equivalent to ~~natures~~ nature’s abhorrence”, “just to point out that it is ~~deeply~~ contestable and not remotely”
- 31-May-2014, 3:40 a.m. PDT: “tool invented by economists, a ~~as~~ technique”
- 2-Jun-2014, 3:50 p.m. PDT: “rather than as ~~the~~ a scientist’s shorthand”, “value-neutral “scientific” ~~economic~~ economics”
- 5-Jun-2014, 6:55 p.m. PDT: “some pretty serious ~~misconception~~ misconceptions”