Represent

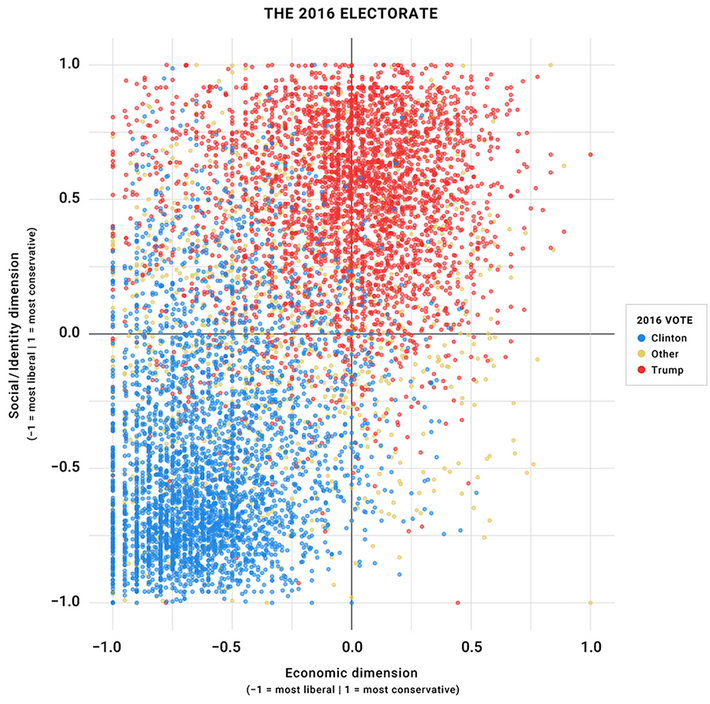

It has become a cliché to say that this election is not about electing a President, but preserving democracy in America. That’s much less because of Donald Trump than because of the “doom loops” (in Lee Drutman’s term) between the two political parties. It’s bad enough that we have only two parties that leave most of us poorly represented. But as the parties increasingly differentiate themselves across issues of identity and culture, the “winner-take-all” nature of our electoral and political institutions can no longer be tempered by horsetrading and difference-splitting. Our conflicts can no longer be resolved by compromises that leave us all feeling represented, albeit imperfectly, and fighting over matters of degree. Instead the parties have manufactured two distinct superidentities, and rehistoricized them as ancient hatreds. Both parties, and the partisans they’ve made us all, agree that we face a battle of good versus evil, sanity versus chaos, for the very soul of the American republic. Only one slight difference remains to be resolved: Which is which, who is who.

Framed this way, I am not neutral. I have a view, my own allegiances. But it is a catastrophe that we have framed things this way. Democracy is a sham, in practice and in theory, if half of the people are simply evil. And they are not, in any objective sense. Our horrible party system, a politics industry that caters to plutocrats, and insiders’ incentives to secure incumbency have caused us to reconstruct ourselves in this way, yin to one another’s miserable yang.

One source of our discord is majoritarianism, which drives destructive competition between our political factions to win just a tiny margin over the other one. “Majority rules” is a deeply flawed basis for democratic institutions. It conflicts with a more functional virtue, representation, the idea that everybody should have a voice, no one’s interests should fall beneath consideration. Under strict majoritarianism, minorities have effectively no voice if a majority organizes to wield power. In liberal democracies, the most catastrophic harms of majoritarianism are (supposed to be) mitigated by minority rights, basic protections and liberties that even organized majorities are not permitted to violate. But even when those are respected, majoritarianism leaves minorities boxed out of any agency in the national project. When organized factions are nearly evenly matched (as our abysmal political institutions encourage), unsubverted majoritarianism would have 50% of the electorate plus 1 rule entirely, no matter how strenuous the objections of the other 50% – 1. This is not a functional or legitimate way to rule. (Our abysmal political institutions are not so straightforwardly majoritarian, so they don’t have that flaw, exactly.)

Opposing majoritarianism is not a defense of minoritarianism. The idea that some benighted minority should rule is obviously worse. But Republicans’ anti-majoritarian turn is itself a function of the structural majoritarianism of the institutions they contest. Majoritarian institutions invite the question “majority of whom?”, and the winner-take-all character of “majority rules” means the whole ballgame can turn on small changes in the always contested boundaries of the enfranchised. Under institutions where power is proportionate rather than binary, temptations to nibble at the edges of electoral eligibility or convenience disappear. Questions of suffrage become the fundamental questions about the nature of the polity that they ought to be, rather than playthings for party operatives looking to shave a few points from their opponents.

Representation is the ideal to which our political institutions should aspire, not “majority rules”. But representation must not be merely formal. Having “representatives” in a legislative body where they are always outvoted is not really representation at all. A democratic polity requires institutions that ensure the entire public is represented in outcomes, not merely in deliberations that become perfunctory or, even worse, performative, as the routinely outvoted grow recklessly extreme to compensate for their impotence and to compete for press. Obviously, contending factions sometimes have mutually exclusive preferences, so that one faction must win and others must lose. But then you should win some and lose some, in proportion to your support among the general public. When possible, compromises should be crafted that attend in degrees to all of the public’s interests, and then passed with a high degree of legislative consensus. And such compromises usually are possible when politics properly orients itself around conflicts in the material world, where differences can be split and priorities traded, rather than around questions of identity, morality, and status, questions on which contending factions must win or lose with nothing in between. Where compromises are not possible, we should have to take turns. Turn-taking should occur much more frequently than multiyear election cycles. Even within a single legislature, the majority should win only sometimes, and lose sometimes, in rough proportion to its dominance. There should be no possibility, none at all, of any faction dominating eternally, winning so big they need never fear reprisal for whatever they do to others. The golden rule must be a norm upheld and always enforceable between political factions. Our politics creates its public much more than the public creates our politics. We should seek a politics that constructs a public respectful of difference, that wields persuasion as its core implement of change.

Representation in the way I’ve described it can conflict with other virtues. There are tensions between turn-taking and the strategic coherence required to run an effective state. When everyone seems represented, it becomes harder to know whom to hold accountable, and how, for policy failures. It must be possible to take actions, sometimes to make big, contentious changes, and commit to them in ways that won’t immediately be reversed in stupid oscillations when the next turn is taken. American institutions already suffer from terrible status quo bias, and richer representation should not exacerbate that.

These are real issues, but they are (imperfectly) resolvable issues. Representation is the heart of democracy. Forms of majoritarianism that circumvent rather than support it should be abandoned. In the short term, for the United States, this means eliminating abominations like the “Hastert Rule” (which both parties and both chambers have now effectively adopted) in favor of legislative procedures designed to maximize enfranchisement, of the minority party and of diverse factions within the parties. In the medium term, it implies changing our electoral system to one that yields proportionate representation, rather than one that reshapes the public into two bitterly contending factions. In the longer term, it implies innovations within the institutions of democracy that ensure turns are always taken except when strong consensus can be reached.

Note: I’ve just read Drutman’s book Breaking the Two-Party Doom Loop, which has helped color my thinking. I’d take issue with some aspects of the political history it tells, but it does a great job of explaining why our politics is such a mess, and casting a light forward towards the kinds of ideas that might remedy it. I heartily recommend it. We’d have a better world if it were more widely read.

Update History:

- 22-Sep-2020, 12:40 p.m. EDT: “…would have 50% of the electorate plus 1 rule entirely, no matter how strenuous the objections of the other 50% – 1.”; “I’ve ~~recently~~ just read Drutman’s book Breaking the Two-Party Doom Loop, which has helped color my ~~recent~~ thinking.”